
Since the EU Artificial Intelligence Act entered into force on 1 August 2024, companies across Europe have had to rethink not only how they build AI systems, but also how they maintain, audit, and repair them when they no longer meet compliance, ethical, or operational standards.

In Ireland specifically, the shift is even more structured. As an EU regulation, the AI Act applies directly in Ireland, and the Irish Government has said that a national AI Office will be operational by August 2026 to coordinate compliance and innovation.

This means one critical trend is emerging quickly:

Companies are no longer choosing agencies simply to “build AI.” They are choosing agencies to fix AI systems across a full lifecycle.

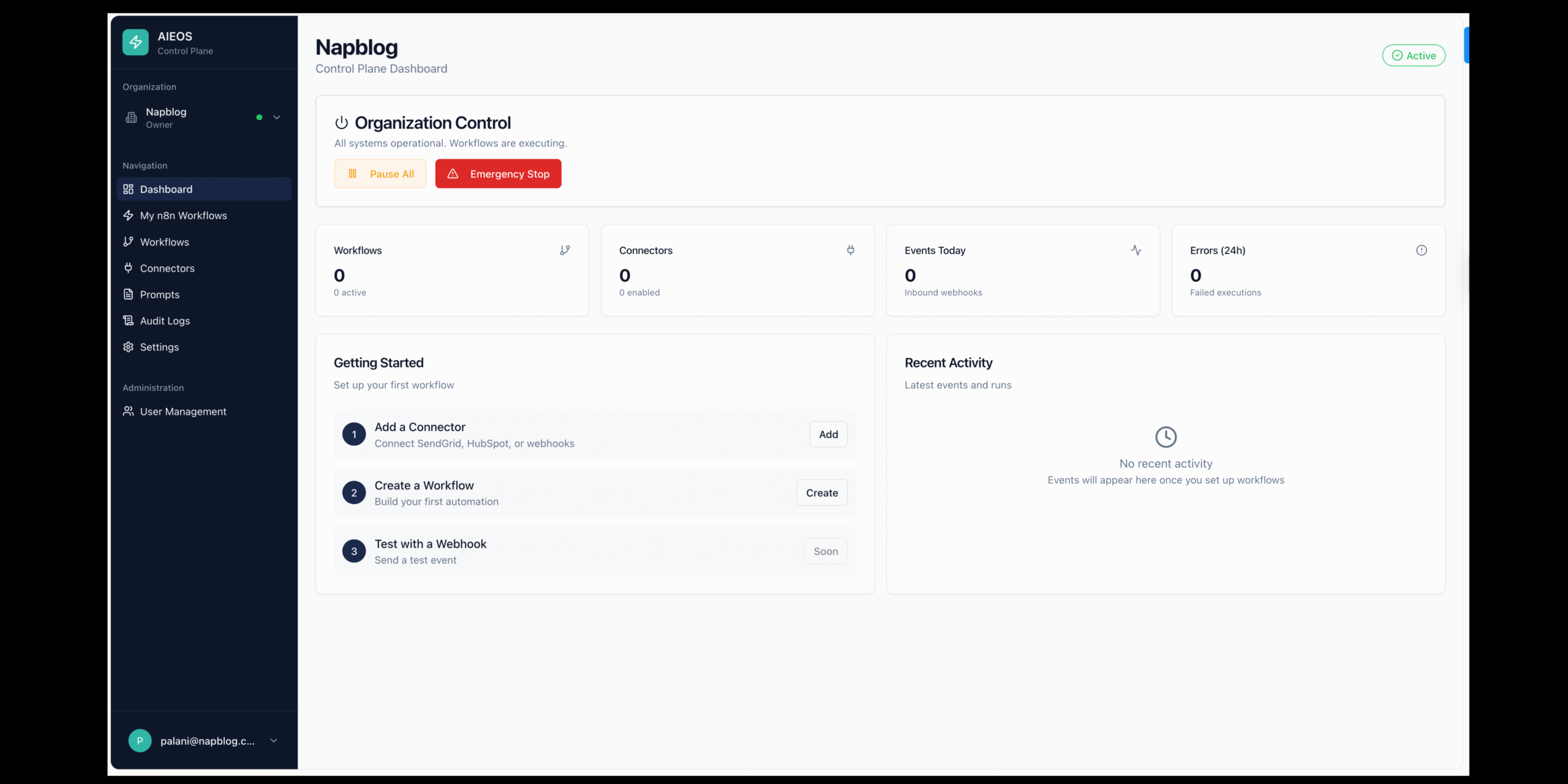

At Napblog Limited, through the AI Europe OS framework, we see a clear pattern in how European and Irish companies select agencies today. What matters most is not marketing or promises, but whether an agency can support a structured 10-step AI lifecycle repair and compliance model.

This article explains that lifecycle in depth and how companies use it to evaluate AI agencies.

Why European Companies Are Re-evaluating Their AI Systems

Many organisations adopted AI between 2020 and 2023 without structured governance. Systems were deployed quickly in marketing automation, customer service, recruitment, pricing models, and predictive analytics. But once the EU AI Act was approved, the situation changed completely.

The regulation introduced a risk-based structure with strict obligations for high-risk AI systems, including documentation, data governance, and human oversight.

As a result, companies across Europe now face three urgent challenges:

- They do not know how many AI systems they actually use.

- Many systems lack documentation or audit trails.

- AI models trained on old or unverified data may no longer be compliant.

This is why organisations are now selecting specialised agencies—not generic digital marketing agencies—to repair their AI stack.

Why Ireland Is Becoming a Key Market for AI System Repair

Ireland is in a unique position in Europe. It hosts European headquarters for many global technology companies while also having a strong SME ecosystem. That combination creates a new type of demand: AI lifecycle compliance combined with operational optimisation.

According to official Irish government guidance, the EU AI Act applies in phases through to 2027, with most major obligations starting in August 2026 and a national AI regulatory sandbox due to launch in the same period.

Because of this timeline, Irish companies are not waiting until the final deadlines. Instead, they are actively selecting agencies that can:

- audit existing AI systems,

- restructure them before penalties apply,

- and integrate governance directly into operations.

This is where the 10-step lifecycle model becomes essential.

The 10-Step Lifecycle European Companies Use to Choose AI Agencies

Below is the exact structure companies increasingly use when evaluating AI agencies. Agencies that cannot support all 10 stages are usually not selected.

Step 1: AI System Discovery and Inventory

Before anything can be fixed, companies must understand what already exists. Many organisations are surprised when they discover how many AI tools are already active inside their operations.

Typical discoveries include:

- CRM automation powered by machine learning,

- chatbots integrated into customer service,

- predictive pricing tools,

- marketing content generators,

- internal productivity AI tools used by employees.

Consultancy firms in Ireland already highlight this as the first requirement: companies must understand their AI exposure before they can manage compliance risks.

This is why the first question European companies ask agencies today is not “What AI can you build?” but rather:

“Can you audit everything we already have?”
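A discovery exercise of this kind usually ends in a structured inventory. The following is a minimal Python sketch of what one could look like, not a prescribed format: the `AISystemRecord` fields and the `summarise_inventory` helper are hypothetical names chosen for illustration, and the sample entries are invented.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystemRecord:
    """One row in a hypothetical AI inventory (field names are assumptions)."""
    name: str
    business_area: str            # e.g. "customer service", "pricing"
    supplier: str                 # "in-house" or "vendor"
    owner: Optional[str] = None   # accountable person; None if nobody is assigned
    documented: bool = False      # True if any technical documentation exists


def summarise_inventory(records):
    """Count systems per business area and list those missing an owner or documentation."""
    return {
        "total": len(records),
        "by_area": dict(Counter(r.business_area for r in records)),
        "no_owner": [r.name for r in records if r.owner is None],
        "undocumented": [r.name for r in records if not r.documented],
    }


# Invented example entries mirroring the typical discoveries listed above.
inventory = [
    AISystemRecord("CRM lead scoring", "sales", "vendor", owner="Head of Sales", documented=True),
    AISystemRecord("Support chatbot", "customer service", "vendor"),
    AISystemRecord("Dynamic pricing model", "pricing", "in-house", owner="Data team"),
    AISystemRecord("Content generator", "marketing", "vendor"),
]

summary = summarise_inventory(inventory)
print(summary["total"], "systems found")
print("Without an owner:", summary["no_owner"])
print("Without documentation:", summary["undocumented"])
```

Even a flat list like this makes the two most common gaps visible at a glance: systems nobody is accountable for and systems with no documentation.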

Step 2: Risk Classification Based on the EU AI Act

Once AI systems are identified, companies need to know which ones fall into high-risk categories.

The EU AI Act is commonly summarised as sorting AI systems into four risk tiers:

- unacceptable risk,

- high risk,

- limited risk,

- minimal risk.

Agencies that specialise in compliance-driven AI are now selected specifically because they can map systems into this structure.

In Ireland especially, companies prefer agencies that understand not only the EU rules but also the future Irish AI Office structure and how national regulators will interpret those rules.
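As a rough illustration of how inventoried systems might be mapped onto the four tiers, here is a first-pass triage sketch in Python. The tag sets are assumptions made up for this example, not an official list, and a real classification has to be derived from the Act's text and reviewed by legal counsel.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable risk"
    HIGH = "high risk"
    LIMITED = "limited risk"
    MINIMAL = "minimal risk"


# Illustrative tags only. These sets are assumptions for the example,
# not a substitute for reading the regulation itself.
PROHIBITED_TAGS = {"social_scoring"}
HIGH_RISK_TAGS = {"recruitment", "credit_scoring"}
TRANSPARENCY_TAGS = {"chatbot", "content_generation"}


def triage(use_case_tags):
    """Return the most severe tier matched by any tag (first-pass triage only)."""
    tags = set(use_case_tags)
    if tags & PROHIBITED_TAGS:
        return RiskTier.UNACCEPTABLE
    if tags & HIGH_RISK_TAGS:
        return RiskTier.HIGH
    if tags & TRANSPARENCY_TAGS:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


# Invented systems and tags for demonstration.
systems = {
    "CV screening tool": ["recruitment"],
    "Support chatbot": ["chatbot"],
    "Spam filter": ["email_filtering"],
}

for name, tags in systems.items():
    print(f"{name}: {triage(tags).value}")
```

Checking the most severe tier first means a system with several uses is always filed under its riskiest one, which is the conservative choice for a triage step.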

Step 3: AI Gap Analysis and Technical Audit

After classification comes the real work: identifying what is broken.

Companies want agencies that can answer questions such as:

- Is the training data still valid?

- Are the outputs biased or unreliable?

- Is there proper documentation?

- Can the system explain its decisions?

This stage is one of the biggest reasons companies are replacing traditional digital agencies with specialised AI lifecycle agencies. Marketing agencies may understand automation—but they rarely understand model risk, explainability, or governance.

Step 4: Data Governance and Data Quality Repair

Many AI systems fail not because of bad algorithms but because of poor data.

European companies now prioritise agencies that can:

- audit data sources,

- remove biased datasets,

- ensure GDPR-compliant data usage,

- rebuild training pipelines using ethical data structures.

According to Irish advisory firms, data readiness and governance are now among the most important parts of AI compliance under the EU AI Act.

That means agencies that focus only on AI tools—but not data architecture—are rapidly losing relevance in Europe.
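A data audit can start with very simple checks. The sketch below, in plain Python, counts missing values in required fields and flags groups that make up too small a share of a dataset. The `audit_rows` function, the field names, the sample rows, and the thresholds are all hypothetical; real thresholds depend on the use case.

```python
from collections import Counter


def audit_rows(rows, required_fields, group_field, min_group_share=0.10):
    """Report missing values per required field and groups below a minimum share.

    The default 10% threshold is an arbitrary illustration, not a legal standard.
    """
    missing = {
        field: sum(1 for row in rows if row.get(field) in (None, ""))
        for field in required_fields
    }
    # Count only rows where the group value is actually present.
    groups = Counter(
        row[group_field] for row in rows if row.get(group_field) not in (None, "")
    )
    counted = sum(groups.values())
    under_represented = sorted(
        group for group, n in groups.items() if n / counted < min_group_share
    )
    return {"rows": len(rows), "missing": missing, "under_represented": under_represented}


# Invented sample: ten training rows with one missing income value.
rows = (
    [{"age_band": "25-40", "income": 42000}] * 6
    + [{"age_band": "41-60", "income": 55000}] * 3
    + [{"age_band": "60+", "income": None}]
)

report = audit_rows(rows, ["age_band", "income"], "age_band", min_group_share=0.15)
print("Missing values:", report["missing"])
print("Under-represented groups:", report["under_represented"])
```

Checks like these do not prove a dataset is unbiased or GDPR-compliant, but they give an auditor a concrete starting list of fields and groups to investigate.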

Step 5: Model Repair and Responsible AI Redesign

Once the data is fixed, the model itself must often be redesigned.

Companies in Europe increasingly prefer agencies that use a “Responsible by Design” approach, meaning AI systems are rebuilt with:

- transparency,

- human oversight,

- ethical decision structures,

- and explainable outputs.

This stage is not about replacing the system entirely—it is about rebuilding it correctly so it can remain operational under future regulations.

Step 6: Governance Framework Implementation

The EU AI Act does not only regulate technology—it regulates organisational responsibility.

That means companies must create:

- AI governance policies,

- internal accountability roles,

- audit procedures,

- AI usage rules for employees.

Consulting firms in Ireland already emphasise that businesses must create governance structures to manage AI risk long before full enforcement begins.

As a result, companies now choose agencies that can design governance frameworks—not just software systems.

Step 7: Technical Documentation and Compliance Preparation

One of the biggest changes introduced by the EU AI Act is the requirement for detailed documentation.

For high-risk AI systems, companies must be able to demonstrate:

- how the system was trained,

- how it makes decisions,

- what risks were identified,

- how those risks were mitigated.

Many organisations cannot produce this documentation today. That is why they are selecting agencies that specialise in AI documentation engineering—a new field that barely existed two years ago.
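One way to make documentation gaps visible is to treat the four points above as fields in a structured record. This Python sketch is an assumption about how such a record could look: `TechnicalFile` and its field names are hypothetical, the example system is invented, and the Act's actual documentation requirements are considerably more detailed.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class TechnicalFile:
    """Hypothetical documentation record; the four text fields mirror the list above."""
    system_name: str
    training_summary: str = ""    # how the system was trained
    decision_logic: str = ""      # how it makes decisions
    identified_risks: str = ""    # what risks were identified
    mitigations: str = ""         # how those risks were mitigated

    def missing_sections(self):
        """Names of sections that are still empty or whitespace only."""
        return [
            key for key, value in asdict(self).items()
            if key != "system_name" and not value.strip()
        ]


# Invented example: two of the four sections have been written so far.
doc = TechnicalFile(
    system_name="CV screening tool",
    training_summary="Model trained on job applications from 2019 to 2022.",
    identified_risks="Possible gender bias inherited from historical hiring decisions.",
)

print("Missing sections:", doc.missing_sections())
print(json.dumps(asdict(doc), indent=2))
```

Exporting the record as JSON keeps it machine-readable, so the same file can feed a compliance dashboard or be versioned alongside the model.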

Step 8: Deployment Repair and Human Oversight Integration

Even when a system is technically compliant, it still may not be operationally safe.

European companies are now choosing agencies that redesign how AI interacts with humans. This includes:

- human-in-the-loop approval systems,

- manual override mechanisms,

- transparent decision dashboards,

- AI literacy training for employees.

Article 4 of the EU AI Act requires providers and deployers to take measures to ensure that staff who operate or use AI systems have a sufficient level of AI literacy. This is becoming a major selection criterion when companies choose agencies.
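The human-in-the-loop and manual override mechanisms listed in this step can be sketched as a small routing rule. In the hypothetical Python example below, the `Decision` record, the `route` and `human_review` functions, and the 0.90 confidence threshold are all illustrative assumptions; for some high-risk uses an organisation may decide that every decision needs human review.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    subject: str
    model_outcome: str                   # e.g. "approve" or "reject"
    confidence: float                    # model's own score in [0, 1]
    final_outcome: Optional[str] = None  # None means "pending human review"
    decided_by: Optional[str] = None


def route(decision, threshold=0.90):
    """Auto-accept confident outcomes; leave the rest pending for a human reviewer.

    The 0.90 threshold is an arbitrary illustration.
    """
    if decision.confidence >= threshold:
        decision.final_outcome = decision.model_outcome
        decision.decided_by = "model"
    return decision


def human_review(decision, reviewer, outcome):
    """Record a human decision; it always replaces the model's outcome (manual override)."""
    decision.final_outcome = outcome
    decision.decided_by = reviewer
    return decision


clear_case = route(Decision("application-001", "approve", 0.97))
border_case = route(Decision("application-002", "reject", 0.62))
print(clear_case.decided_by)       # accepted automatically
print(border_case.final_outcome)   # still pending

human_review(border_case, reviewer="j.murphy", outcome="approve")
print(border_case.final_outcome, "by", border_case.decided_by)
```

Recording who made the final call on every decision also produces the audit trail that a transparent decision dashboard would display.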

Step 9: Continuous Monitoring and Automated Compliance

Fixing AI once is not enough. Systems evolve, and regulations evolve too.

That is why companies now want agencies that offer continuous AI monitoring rather than one-time implementation.

Typical services include:

- performance monitoring,

- bias detection,

- automated risk alerts,

- real-time compliance dashboards.

In Ireland especially, companies are preparing for August 2026, when most obligations for high-risk AI systems are scheduled to apply; those for AI embedded in already-regulated products follow in August 2027.

This means long-term AI lifecycle support is now more valuable than short-term development projects.
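Bias detection and automated risk alerts can begin with a simple recurring check. The Python sketch below compares selection rates between groups in a batch of decisions and raises an alert when the gap exceeds a threshold. The function names, the 0.20 threshold, and the sample batch are assumptions for illustration; this is one basic fairness metric among many, not a complete monitoring system.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """outcomes: iterable of (group, selected) pairs -> share selected per group."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in outcomes:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def parity_alert(outcomes, max_gap=0.20):
    """Flag a batch when the gap between the highest and lowest selection rate exceeds max_gap.

    The 0.20 default is an arbitrary illustration, not a regulatory threshold.
    """
    rates = selection_rates(outcomes)
    if not rates:
        return {"rates": {}, "gap": 0.0, "alert": False}
    gap = max(rates.values()) - min(rates.values())
    return {"rates": rates, "gap": gap, "alert": gap > max_gap}


# Invented batch: group_a is selected 8 times out of 10, group_b 5 times out of 10.
batch = (
    [("group_a", True)] * 8 + [("group_a", False)] * 2
    + [("group_b", True)] * 5 + [("group_b", False)] * 5
)

result = parity_alert(batch)
print("Selection rates:", result["rates"])
print("Gap:", round(result["gap"], 2), "Alert:", result["alert"])
```

Run on a schedule against production decisions, a check like this turns a one-time audit into the kind of ongoing monitoring described in this step.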

Step 10: Certification, Regulatory Sandbox Support, and Long-Term Partnership

The final step is the one most agencies still ignore—but it is the most important one for European companies.

Under the EU AI Act:

- high-risk AI systems must meet conformity requirements,

- national authorities must establish regulatory sandboxes,

- companies must maintain compliance documentation continuously.

In Ireland specifically, the national AI Office will coordinate regulatory implementation and provide a central point of contact for businesses.

Because of this, companies are no longer choosing agencies based on short-term results. They are choosing long-term AI partners that can support them through the entire lifecycle.

How Irish Companies Specifically Choose AI Agencies

While the lifecycle model applies across Europe, Irish companies follow a slightly different decision logic.

They typically prioritise agencies that:

- Understand both the EU AI Act and Irish regulatory structures.

- Can support SMEs, not only large enterprises.

- Provide a practical roadmap rather than theoretical consulting.

- Focus on fixing existing systems rather than building new ones from scratch.

- Offer AI governance combined with operational efficiency.

This is why smaller specialised AI lifecycle agencies are now competing directly with large consulting firms.

The Shift From “AI Development” to “AI System Repair”

One of the biggest trends in 2026 is this:

The real opportunity in Europe is no longer building AI. It is repairing AI.

Companies already have:

- automation systems,

- generative AI tools,

- analytics models,

- internal decision-making systems.

What they need now is:

- compliance,

- reliability,

- transparency,

- governance,

- and long-term sustainability.

Agencies that understand this shift are growing quickly. Agencies that focus only on new AI projects are falling behind.

The Role of AI Europe OS in This Transformation

At Napblog Limited, the AI Europe OS framework was designed specifically to support this new lifecycle model.

Instead of focusing only on AI development, the platform focuses on:

- AI system audits,

- lifecycle compliance,

- ethical AI design,

- automation restructuring,

- and long-term AI governance.

This approach aligns directly with how European companies now evaluate agencies.

They are not looking for hype.

They are looking for structure, security, and reliability.

What the Next Five Years Will Look Like

By 2027, most AI systems in Europe will fall into one of two categories:

- Systems that were repaired and structured correctly.

- Systems that were abandoned because they could not meet compliance standards.

The companies that succeed will not necessarily be the ones that adopted AI first—but the ones that managed it correctly.

And the agencies that succeed will not be those that promised “faster automation,” but those that understood the full lifecycle from audit to certification.

Final Thoughts

European and Irish companies are entering a new phase of artificial intelligence adoption. The focus is no longer speed—it is responsibility. It is no longer about innovation alone—it is about sustainability, compliance, and long-term trust.

The agencies that win in this market will be those that can guide companies through a complete 10-step AI lifecycle repair model, from discovery to certification.

At Napblog Limited, through AI Europe OS, we believe this shift represents one of the biggest opportunities in the European digital economy over the next decade.

Because the future of AI in Europe will not be defined by how quickly it is built.

It will be defined by how responsibly it is fixed.