Last updated: May 29, 2026

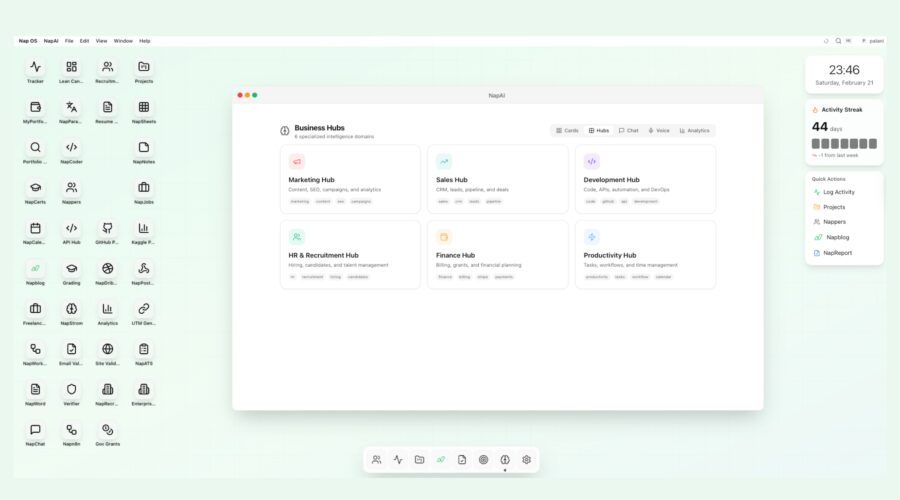

The future of work is no longer about fragmented tools. It is about unified intelligence. Professionals today juggle dozens of platforms—project management apps, CRMs, resume builders, analytics dashboards, chat assistants, document repositories—yet none of them truly understand the user’s full context. This is the fundamental problem Nap OS solves.

At the center of Nap OS sits the AI Intelligence Hub, a unified intelligence architecture that connects skills, workflows, knowledge, and execution into a single operating layer. Powered by the Claude API, Retrieval-Augmented Generation (RAG), Large Language Models (LLMs), and scalable AWS cloud infrastructure, Nap OS delivers contextual, domain-specific intelligence that adapts to users in real time.

This article explores how the Nap OS AI Intelligence Hub works, how Skills Cards create structured capability intelligence, and how the Native Knowledge Base transforms static data into dynamic insight.

1. The AI Intelligence Hub: A Domain-Centric Architecture

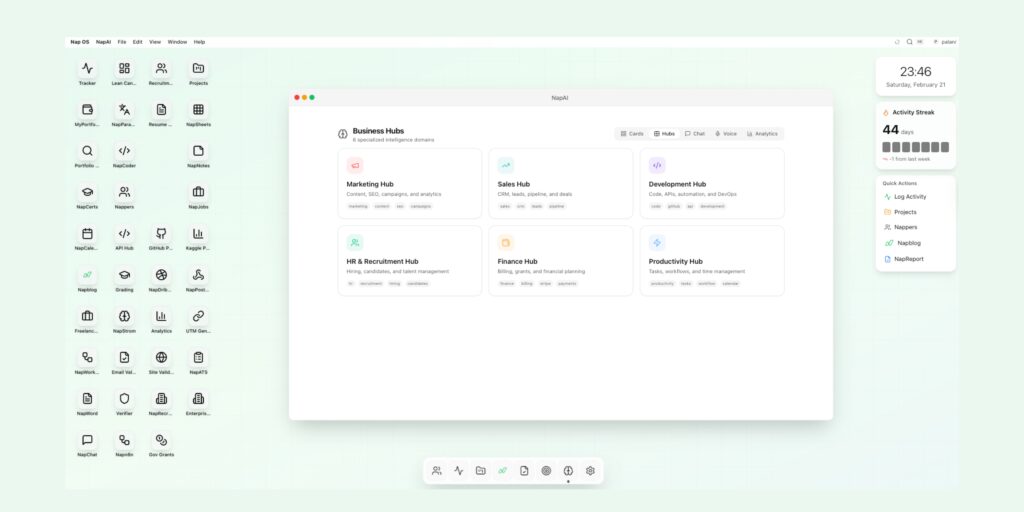

Unlike generic AI chat interfaces, Nap OS is architected around Business Hubs—Marketing, Sales, Development, HR & Recruitment, Finance, Productivity, and more. Each hub functions as a specialized intelligence domain.

Rather than treating AI as a standalone chatbot, Nap OS embeds intelligence inside operational modules. When a user enters the Marketing Hub, the AI understands campaign metrics, SEO context, and brand positioning. In the HR Hub, it understands candidate evaluation, job descriptions, and screening criteria.

This domain-centric design creates three strategic advantages:

- Contextual Awareness – AI responses are grounded in hub-specific data.

- Action-Oriented Intelligence – Outputs translate directly into tasks, documents, or system updates.

- Workflow Continuity – AI operates within operational flow, not outside of it.

The Intelligence Hub is not a feature—it is the system backbone.

2. Skills Cards: Structured Capability Intelligence

Traditional resumes and profiles are static representations of capability. They list experiences but fail to translate them into measurable, dynamic skills. Nap OS introduces Skills Cards—a structured, evolving intelligence layer that quantifies and operationalizes skills.

What Are Skills Cards?

Skills Cards are AI-structured skill profiles that combine:

- Competency level analysis

- Project history mapping

- Performance metrics

- Certification validation

- Behavioral indicators

- Peer and employer validation

- Real-time skill activity signals

Each Skills Card acts as a micro-intelligence module. It is not merely a label like “Python Developer” or “Digital Marketer.” Instead, it becomes a living object with metadata, usage history, and performance signals.
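
As a minimal sketch of what such a living object might look like, the Python below models a Skills Card as a small data structure. The `SkillsCard` and `Evidence` classes, their field names, and the 0-to-1 competency scale are hypothetical illustrations, not the actual Nap OS schema.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class Evidence:
    """One hypothetical piece of supporting evidence for a skill."""
    kind: str            # e.g. "project", "certification", "peer_review"
    description: str
    recorded_on: date


@dataclass
class SkillsCard:
    """Illustrative Skills Card: a skill label plus metadata and history."""
    skill: str
    competency: float = 0.0   # assumed scale: 0.0 (none) to 1.0 (expert)
    evidence: list[Evidence] = field(default_factory=list)

    def add_evidence(self, item: Evidence) -> None:
        """Append a new evidence record to the card's history."""
        self.evidence.append(item)

    @property
    def last_activity(self) -> Optional[date]:
        """Most recent evidence date, or None if the card has no history."""
        return max((e.recorded_on for e in self.evidence), default=None)


card = SkillsCard(skill="Python Developer", competency=0.6)
card.add_evidence(Evidence("project", "Built an ETL pipeline", date(2025, 3, 1)))
card.add_evidence(Evidence("certification", "Cloud practitioner exam", date(2025, 6, 15)))
print(card.last_activity)  # 2025-06-15
```

The point of the sketch is the shape: the label is only one field, and the history attached to it is what makes the card queryable.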

Dynamic Skill Intelligence

Powered by LLM analysis and contextual scoring, Skills Cards update automatically when users:

- Complete projects

- Upload certifications

- Contribute to repositories

- Execute campaigns

- Perform tasks inside hubs

- Receive peer evaluations

This creates a dynamic skills graph across the Nap OS ecosystem.
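
One way such automatic updates could work is an exponential moving average, where each new event nudges the score toward the quality observed in that event. The sketch below assumes this approach; the event names, weights, and the `update_score` function are invented for illustration and do not describe Nap OS's actual scoring.

```python
# Hypothetical weights: how strongly each event type moves a skill score.
EVENT_WEIGHTS = {
    "project_completed": 0.30,
    "certification_uploaded": 0.25,
    "peer_evaluation": 0.15,
    "task_performed": 0.05,
}


def update_score(current: float, event_type: str, quality: float) -> float:
    """Blend the current score with the quality observed in a new event.

    Both `current` and `quality` are assumed to lie in [0, 1]. The weight acts
    as the smoothing factor of an exponential moving average, so the result
    also stays in [0, 1].
    """
    if not 0.0 <= quality <= 1.0:
        raise ValueError("quality must be between 0 and 1")
    weight = EVENT_WEIGHTS.get(event_type, 0.0)  # unknown events change nothing
    return (1 - weight) * current + weight * quality


score = 0.50
score = update_score(score, "project_completed", quality=0.9)
score = update_score(score, "peer_evaluation", quality=0.8)
print(round(score, 3))  # 0.647
```

Larger weights make a score react faster to new evidence, while smaller weights make it more stable.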

AI-Driven Skill Validation

Claude-powered evaluation models analyze:

- Document submissions

- Portfolio entries

- GitHub contributions

- Campaign analytics

- Writing samples

- Technical solutions

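The system assesses quality signals rather than keyword presence. The goal is more consistent, evidence-based skill inference that is less exposed to the bias of keyword and pedigree filtering, which in turn makes the results easier to trust.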

Skills-to-Opportunity Matching

Within NapJobs and the HR & Recruitment Hub, Skills Cards drive:

- Candidate ranking algorithms

- Intelligent job matching

- Automated shortlist generation

- Gap analysis recommendations

- Personalized upskilling pathways

This transforms recruitment from resume filtering to capability intelligence.
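
Candidate ranking and gap analysis can be illustrated with a simple coverage score. The sketch below assumes skills are expressed as levels between 0 and 1 and that required levels are greater than zero. The scoring formula, the `match_candidate` function, and the sample data are all hypothetical.

```python
def match_candidate(required: dict[str, float], candidate: dict[str, float]):
    """Return (fit, gaps) for one candidate against a job's required levels.

    fit  : mean of min(candidate level / required level, 1) over required skills
    gaps : skills where the candidate is below the required level, with shortfall
    Required levels are assumed to be greater than zero.
    """
    ratios = []
    gaps = {}
    for skill, needed in required.items():
        have = candidate.get(skill, 0.0)
        ratios.append(min(have / needed, 1.0))
        if have < needed:
            gaps[skill] = round(needed - have, 2)
    return sum(ratios) / len(ratios), gaps


job = {"python": 0.8, "sql": 0.6, "data_visualization": 0.5}
candidates = {
    "alice": {"python": 0.9, "sql": 0.3},
    "bob": {"python": 0.8, "sql": 0.6, "data_visualization": 0.5},
}

# Rank candidates by fit, best first; the gaps feed upskilling suggestions.
ranked = sorted(
    ((name, *match_candidate(job, skills)) for name, skills in candidates.items()),
    key=lambda row: row[1],
    reverse=True,
)
for name, fit, gaps in ranked:
    print(name, round(fit, 2), gaps)
```

Here `bob` meets every requirement and ranks first, while `alice` is returned with explicit shortfalls in `sql` and `data_visualization` that could seed an upskilling pathway.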

3. Native Knowledge Base: From Storage to Understanding

Most platforms treat knowledge as storage. Nap OS treats knowledge as intelligence.

The Native Knowledge Base (NKB) is an integrated repository that connects:

- User-generated documents

- Organizational knowledge

- Hub data

- Public domain references

- Uploaded files

- Notes and project artifacts

Instead of operating as a standalone document library, the NKB is embedded into the AI Intelligence Hub via a RAG architecture.

Retrieval-Augmented Generation (RAG) Explained

RAG combines:

- A vector database for semantic search.

- Context retrieval mechanisms.

- LLM-based response generation grounded in retrieved content.

When a user asks a question, Nap OS:

- Searches the vectorized knowledge base.

- Retrieves contextually relevant documents.

- Injects them into Claude’s prompt.

- Generates grounded, citation-aware responses.

This reduces the risk of hallucination and keeps answers aligned with the domain, because the model responds from retrieved content rather than from memory alone.
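
The four steps above can be sketched in a few lines of Python. To stay self-contained, this example swaps in a toy word-count "embedding" and an in-memory dictionary in place of a real embedding model and vector database, and it stops at prompt assembly rather than calling a model. The document ids and helper names are made up for illustration.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': word counts. A real system would use an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, docs: dict[str, str], k: int = 2) -> list[str]:
    """Return the ids of the k documents most similar to the question."""
    q = embed(question)
    return sorted(docs, key=lambda d: cosine(q, embed(docs[d])), reverse=True)[:k]


def build_prompt(question: str, docs: dict[str, str], doc_ids: list[str]) -> str:
    """Inject retrieved passages, tagged with ids so the answer can cite them."""
    context = "\n".join(f"[{d}] {docs[d]}" for d in doc_ids)
    return (
        "Answer using only the sources below and cite their ids.\n"
        f"Sources:\n{context}\n\nQuestion: {question}"
    )


docs = {
    "mkt-plan": "Q3 marketing plan: increase paid search budget and SEO content.",
    "hr-policy": "Remote work policy for all employees and contractors.",
    "fin-forecast": "Budget forecast for Q3 with marketing and sales spend.",
}
question = "What is the Q3 marketing budget?"
top = retrieve(question, docs)
prompt = build_prompt(question, docs, top)
print(prompt)
```

The unrelated HR policy never reaches the prompt, so the model is only asked to reason over the two relevant sources. In a full pipeline, `prompt` would then be sent to the language model.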

Context Preservation

The Native Knowledge Base enables:

- Organizational memory

- User-specific memory

- Project continuity

- Cross-hub knowledge linking

For example:

A marketing strategy document uploaded in the Marketing Hub can inform budget projections in the Finance Hub automatically.

This is contextual intelligence at scale.

4. Claude API Integration: Advanced Reasoning at the Core

Nap OS uses the Claude API for advanced reasoning, summarization, evaluation, and generation.

Why Claude?

Claude models are known for:

- Long-context processing

- Structured reasoning

- Safe and aligned responses

- Analytical depth

In Nap OS, Claude handles:

- Multi-document synthesis

- Skill assessment reasoning

- Campaign optimization recommendations

- Code analysis

- Policy interpretation

- Strategic planning outputs

Claude does not operate alone. It functions within the RAG framework so that its outputs are grounded in retrieved data rather than in the model's general knowledge.

Structured Prompt Engineering

Nap OS uses dynamic prompt templates including:

- Role-based context injection

- Hub-specific domain constraints

- Structured output formatting

- Metadata tagging

- Confidence scoring

This ensures responses are not generic but operationally executable.
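
A dynamic prompt template of this kind might look like the sketch below. It uses Python's standard `string.Template` to inject role and hub context, asks for structured JSON output, and validates the reply. The template wording, the required keys, and the sample reply are assumptions made for illustration and are not Nap OS's actual prompts.

```python
import json
from string import Template

# Hypothetical template; the placeholders mirror the elements listed above.
PROMPT_TEMPLATE = Template(
    "You are assisting a $role in the $hub Hub.\n"
    "Constraints: $constraints\n"
    "Respond with JSON containing the keys: $keys.\n"
    "Task: $task"
)

REQUIRED_KEYS = ("answer", "confidence", "tags")


def render_prompt(role: str, hub: str, constraints: list[str], task: str) -> str:
    """Fill the template with role, hub, constraint, and task context."""
    return PROMPT_TEMPLATE.substitute(
        role=role,
        hub=hub,
        constraints="; ".join(constraints),
        keys=", ".join(REQUIRED_KEYS),
        task=task,
    )


def parse_response(raw: str) -> dict:
    """Validate that a model reply is JSON with the expected keys."""
    data = json.loads(raw)
    missing = [k for k in REQUIRED_KEYS if k not in data]
    if missing:
        raise ValueError(f"response is missing keys: {missing}")
    if not 0.0 <= float(data["confidence"]) <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return data


prompt = render_prompt(
    role="recruiter",
    hub="HR & Recruitment",
    constraints=["use only supplied candidate data", "no protected attributes"],
    task="Draft three interview questions for a data analyst role.",
)
# A made-up reply, standing in for what a model might return.
reply = '{"answer": "1) ...", "confidence": 0.7, "tags": ["interview"]}'
print(parse_response(reply)["confidence"])  # 0.7
```

Validating the reply against a fixed set of keys is what lets downstream code turn a response into a task or document without manual cleanup.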

5. Large Language Model (LLM) Orchestration Layer

The AI Intelligence Hub is not dependent on a single model. It uses a layered LLM orchestration architecture:

- Primary Reasoning Model (Claude API)

- Embedding Models for Vector Search

- Lightweight Models for Quick Classification

- Evaluation Models for Skill Scoring

- Guardrail Systems for Safety & Compliance

This orchestration enables:

- Cost efficiency

- Speed optimization

- Accuracy enhancement

- Domain specialization

The system dynamically selects the appropriate model for the task.
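
Model selection can be as simple as a routing table with a fallback. In the sketch below, the tier names are placeholders rather than real model identifiers, and the cost ranks and the `select_model` function are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str            # placeholder label, not a real model id
    relative_cost: int   # assumed cost rank: 1 = cheapest


# Hypothetical routing table keyed by task type.
ROUTES = {
    "classification": ModelTier("lightweight-classifier", 1),
    "embedding": ModelTier("embedding-model", 1),
    "skill_scoring": ModelTier("evaluation-model", 2),
    "reasoning": ModelTier("primary-reasoning-model", 3),
}


def select_model(task_type: str, needs_deep_reasoning: bool = False) -> ModelTier:
    """Pick a tier for a task.

    Unknown task types, and tasks flagged as needing deep reasoning,
    fall back to the primary reasoning tier.
    """
    if needs_deep_reasoning:
        return ROUTES["reasoning"]
    return ROUTES.get(task_type, ROUTES["reasoning"])


print(select_model("classification").name)   # lightweight-classifier
print(select_model("policy_question").name)  # primary-reasoning-model
```

Routing cheap, high-volume tasks away from the primary model is where the cost and speed benefits listed above would come from.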

6. AWS Infrastructure: Scalability and Reliability

Nap OS runs on AWS cloud infrastructure to ensure:

- Horizontal scalability

- Secure data isolation

- High availability

- Elastic compute allocation

- Enterprise-grade compliance

Key AWS Components

- Amazon EC2 / ECS – Compute orchestration

- Amazon S3 – Secure document storage

- Amazon RDS – Structured relational data

- AWS Lambda – Event-driven workflows

- Amazon OpenSearch Service – Vector search implementation

- IAM & KMS – Identity and encryption management

This architecture supports:

- Real-time inference

- Multi-tenant enterprise deployments

- High throughput AI requests

- Secure enterprise adoption
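
To make the event-driven piece concrete, here is a sketch of a Lambda handler that reacts to a document landing in S3. The handler follows the standard S3 notification event shape. The `enqueue_for_indexing` helper, the bucket name, and the idea that Nap OS wires uploads to indexing this way are assumptions for illustration.

```python
from urllib.parse import unquote_plus


def enqueue_for_indexing(bucket: str, key: str) -> dict:
    """Hypothetical stand-in for the real work (e.g. chunking and embedding)."""
    return {"bucket": bucket, "key": key, "status": "queued"}


def handler(event: dict, context: object) -> list[dict]:
    """Lambda entry point for S3 'object created' notifications.

    S3 URL-encodes object keys in the event payload, so they are decoded
    before use.
    """
    jobs = []
    for record in event.get("Records", []):
        bucket = record["s3"]["bucket"]["name"]
        key = unquote_plus(record["s3"]["object"]["key"])
        jobs.append(enqueue_for_indexing(bucket, key))
    return jobs


# Local invocation with a trimmed, made-up event of the shape S3 sends.
sample_event = {
    "Records": [
        {"s3": {"bucket": {"name": "example-bucket"}, "object": {"key": "docs/Q3+plan.pdf"}}}
    ]
}
print(handler(sample_event, None))
```

Because the handler is a plain function, it can be exercised locally with a sample event before being deployed.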

7. Intelligence Across Business Hubs

The AI Intelligence Hub integrates seamlessly into Nap OS operational domains:

Marketing Hub

- Campaign performance analysis

- SEO strategy generation

- Automated content ideation

- Conversion optimization modeling

Sales Hub

- Lead scoring using skill alignment

- CRM enrichment

- Deal probability forecasting

- Personalized outreach scripts

Development Hub

- Code review automation

- API documentation generation

- DevOps pipeline insights

- Technical skill validation

HR & Recruitment Hub

- AI-based candidate shortlisting

- Skills gap mapping

- Behavioral analysis insights

- Interview question generation

Finance Hub

- Budget forecasting

- Expense categorization

- Grant application drafting

- Financial scenario modeling

Productivity Hub

- Workflow automation

- Smart task generation

- Calendar intelligence

- Activity streak analytics

Each hub becomes an intelligent environment rather than a static dashboard.

8. Knowledge Graph & Skill Graph Integration

Nap OS connects Skills Cards and the Native Knowledge Base into an internal knowledge graph.

This enables:

- Cross-domain recommendations

- Multi-skill clustering

- Organizational capability mapping

- Team composition optimization

- Internal talent mobility suggestions

For example:

If a project requires data visualization expertise and marketing strategy, the system identifies users whose Skills Cards intersect those nodes.

This turns Nap OS into a strategic talent intelligence platform.
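
The intersection query in the example above can be sketched with an inverted index from skills to users. The user names and skill sets are made-up data, and a production system would likely use a graph or search store rather than in-memory dictionaries.

```python
from collections import defaultdict

# Made-up data: each user's Skills Cards reduced to a set of skill nodes.
user_skills = {
    "dana": {"data_visualization", "marketing_strategy", "sql"},
    "eli": {"data_visualization", "python"},
    "fay": {"marketing_strategy", "copywriting"},
}

# Invert into skill -> users so lookups by skill node are direct.
skill_index: dict[str, set[str]] = defaultdict(set)
for user, skills in user_skills.items():
    for skill in skills:
        skill_index[skill].add(user)


def users_with_all(required: set[str]) -> set[str]:
    """Users whose cards cover every required skill node."""
    groups = [skill_index.get(s, set()) for s in required]
    return set.intersection(*groups) if groups else set()


def users_by_coverage(required: set[str]) -> list[tuple[str, int]]:
    """All users ranked by how many of the required skills they cover."""
    counts = {u: len(s & required) for u, s in user_skills.items()}
    return sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))


needed = {"data_visualization", "marketing_strategy"}
print(users_with_all(needed))      # {'dana'}
print(users_by_coverage(needed))   # [('dana', 2), ('eli', 1), ('fay', 1)]
```

The strict intersection finds a single person who covers the project, while the coverage ranking suggests how a team could be composed when no one person does.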

9. Security, Privacy, and Responsible AI

Enterprise AI adoption requires trust.

Nap OS ensures:

- End-to-end encryption

- Role-based access control

- Data segmentation

- Audit logging

- Model output monitoring

- Human override capabilities

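Because retrieval is restricted by access controls, the RAG pipeline draws only on data the requesting user is authorized to see, so AI outputs are grounded in user-authorized content.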

Proprietary content is not used to train external models.
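
Grounding outputs in user-authorized data depends on filtering documents before they ever reach retrieval. The sketch below shows one possible form of that filter, combining tenant segmentation with role-based access. The `Document` fields, tenant names, and roles are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    tenant: str
    allowed_roles: frozenset[str]
    text: str


def authorized_documents(docs: list[Document], tenant: str, roles: set[str]) -> list[Document]:
    """Keep only documents in the caller's tenant that one of their roles may read."""
    return [d for d in docs if d.tenant == tenant and d.allowed_roles & roles]


corpus = [
    Document("salary-bands", "acme", frozenset({"hr_admin"}), "Salary bands by level."),
    Document("brand-guide", "acme", frozenset({"marketer", "hr_admin"}), "Brand voice guide."),
    Document("other-plan", "globex", frozenset({"marketer"}), "Another tenant's plan."),
]

# Only the filtered subset would be passed on to vector search and the prompt.
visible = authorized_documents(corpus, tenant="acme", roles={"marketer"})
print([d.doc_id for d in visible])  # ['brand-guide']
```

Applying the filter before retrieval, rather than after generation, means restricted text never enters the model's context in the first place.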

10. Competitive Differentiation

Most AI platforms fall into one of three categories:

- Standalone chatbots

- Isolated productivity tools

- CRM or ATS platforms with basic AI add-ons

Nap OS differentiates by integrating:

- Structured Skills Intelligence

- Native Knowledge Architecture

- Domain-Specific AI Hubs

- RAG-powered contextual reasoning

- Enterprise AWS infrastructure

It is not a chatbot embedded in a dashboard. It is an intelligence layer embedded in an operating system.

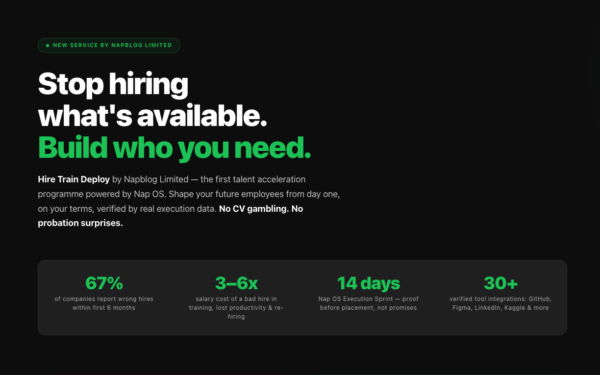

11. Real-World Impact

For Students:

- Clear skill validation

- Portfolio-backed credibility

- Intelligent career path guidance

For Employers:

- Verified capability intelligence

- Automated shortlisting

- Reduced hiring bias

For Teams:

- Knowledge continuity

- Cross-functional intelligence

- Automated documentation

For Enterprises:

- Centralized AI governance

- Secure scalable architecture

- Productivity acceleration

12. The Strategic Vision

Nap OS envisions a world where:

- Skills are measurable.

- Knowledge is searchable and contextual.

- AI is embedded, not external.

- Intelligence operates in real time.

- Every action contributes to a dynamic capability profile.

The AI Intelligence Hub is the neural core.

Skills Cards are the synaptic nodes.

The Native Knowledge Base is the memory layer.

Claude API provides reasoning.

RAG provides grounding.

AWS provides scalability.

Together, they form a true operating system for modern professionals.

Conclusion

The Nap OS AI Intelligence Hub represents a structural evolution in how intelligence is deployed within digital ecosystems. By combining Skills Cards, a Native Knowledge Base, Claude API reasoning, RAG architecture, and AWS cloud infrastructure, Nap OS moves past the limitations of standalone AI assistants.

It moves beyond conversation into execution.

Beyond data into understanding.

Beyond resumes into measurable skills.

Beyond storage into intelligence.

In a world saturated with tools, Nap OS does not add another application. It creates an intelligent operating layer that unifies capability, knowledge, and workflow into one seamless system.

This is not incremental innovation.

It is architectural transformation.

Nap OS is where structured skills meet contextual AI—at scale.